The countdown is on. Three days until my iClone trial version expires, and I don’t intend to waste a single minute.

For the past couple of days I’ve been playing around with a new character. Meet Finn Wilde, star of Amanda Borenstadt’s novel Syzygy:

Yesterday Amanda gave me the thumbs-up for this “actor”. I learned from making the Stonehaven machinima that casting is critical–the face we choose for the video will become the face of the novel for many people. I’m quite taken with this fellow–I wish he were real. I would definitely be a fan.

The process of character creation begins–at least for me–with a conversation. Amanda and I emailed back and forth a few times discussing her vision for the cast. Months earlier, she mentioned that the role of Finn would ideally be given to a young Russell Crowe. No problem, I thought. . .until I started hunting for high resolution photos of a young Russell Crowe.

It has never been my intention to hijack a real actor’s face for my project. But selecting a real person as a model for a character is the quickest and easiest way for a writer to communicate their ideas to me. I had planned to use a photo of young Russell Crowe to “skin” the iClone puppet, then modify bone structure so it didn’t look enough like Russell Crowe to invite a lawsuit. Alas, no usable photos of this man in his youth seem to exist on the Net. Yes, I did find some early pictures, but nothing of a quality I can work with. Face mapping photos must be high resolution, full face frontal with even lighting (otherwise one side of the puppet’s face will be darker than the other) and no teeth showing. Mug shots would be ideal.

After days of searching, I finally discovered that Russell Crowe has a lookalike. A young actor named Ben McKenzie has often been compared to Crowe, as in the photo below:

So I revised my search and found this one of Ben:

which I altered in my graphics editor to become an iClone texture:

I imported this texture image into iClone and began the process of creating Finn Wilde.

Below is an embedded link to a 30-second “audition” of this character. Don’t expect to be wowed. . .at least not at first. Once I explain what’s important about the scene, I’m sure my excitement over iClone will make more sense.

Like before, because I rendered this clip from a trial version of iClone, that awful, ugly watermark is stamped all over the video. Also, please note that the bleedthrough (tattering) around the edges of the shirt is related to an improper bone movement I made with the right shoulder. You can’t really see the bad placement in the video, but let’s just say it was another lesson learned. 😉

If you watch carefully, you’ll see that as the camera pans around him, one corner of his mouth twitches upward, then a slow smile spreads across his face. This would be absolutely impossible with Sims 2.

In Finn’s mouth are top teeth (one of the complaints about Sims was that only their bottom teeth showed). They are dazzling white in this clip, but I can turn them any color I want by simply moving a slider in iClone. The amazing thing, at least to me, is that I had full control over this facial expression. I created it “from scratch” by working with a face key. This allowed me to first move each muscle group that I thought should be involved in a smiling animation, then go back into the “detail” panel and fine-tune each feature.

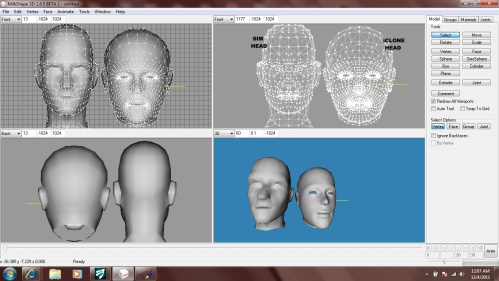

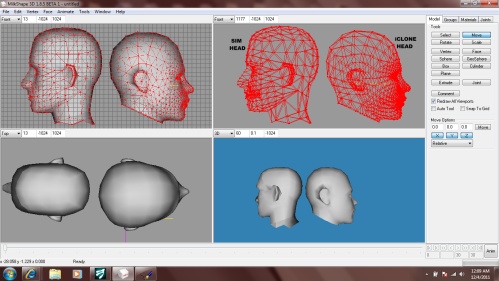

This is where iClone really starts flexing its muscle. In the screenshots below, you’ll see the four-viewport window of Milkshape, where I’ve imported a Sim head as well as an iClone puppet head. The thing I want you to notice is the number of white dots in each mesh. Each white dot is what’s called a “vertex” (the plural is “vertices”). Each vertex is assigned to a “bone” that controls its movement when animating.

With only a glance, you should be able to note that the iClone head has quite a few more vertices than the Sim head. Why does this matter?

Connected to each dot in the mesh is a line. These lines form tiny triangles that make up the mesh. Each triangle is called a “face,” or a polygon (poly.) If a mesh has lots of faces, or a “high poly count,” it requires much effort for a computer to render that graphic on the screen. Sims 2 is a game, and it’s played in “real time.” In other words, when you click on a character and tell it to go pee, you expect it to go pee immediately. If the Sims 2 bodies had a high poly count, it would take so much time for the computer to render each frame of their movement that you’d get what’s called “lag.” Lag is when the computer seems to freeze and think about what it should do next while the game actually continues at normal speed in the background. Say, for instance, you were playing a hunting game that required you to shoot a moving target. If the objects in the game had such high poly counts that they created lag, you might aim at the target, but by the time you had it in your crosshairs on screen, the game would register it being on the other side of the meadow. You’d miss your shot every time.
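For the curious, here is a toy sketch of the idea in Python. It models a mesh as a list of vertices plus a list of triangular faces, then estimates how long one frame takes to draw. The `triangles_per_second` figure is a made-up stand-in for how fast a given computer can draw, not a measurement of any real machine, and real renderers are far more complicated than a single division.

```python
from dataclasses import dataclass


@dataclass
class Mesh:
    vertices: list  # (x, y, z) tuples: the "white dots"
    faces: list     # triples of vertex indices: the tiny triangles

    @property
    def poly_count(self):
        """Number of triangular faces in the mesh."""
        return len(self.faces)


def frame_time_ms(poly_count, triangles_per_second):
    """Rough time to draw one frame, in milliseconds (toy model)."""
    return poly_count / triangles_per_second * 1000.0


def keeps_up(poly_count, triangles_per_second, target_fps=30):
    """True if a frame can be drawn within the time budget of one frame."""
    return frame_time_ms(poly_count, triangles_per_second) <= 1000.0 / target_fps


# A flat square built from two triangles: 4 vertices, 2 faces.
quad = Mesh(
    vertices=[(0, 0, 0), (1, 0, 0), (1, 1, 0), (0, 1, 0)],
    faces=[(0, 1, 2), (0, 2, 3)],
)
```

With a hypothetical machine that draws one million triangles per second, a 5,000-poly scene fits comfortably inside a 30-frames-per-second budget, while a 50,000-poly scene does not. That gap is the lag.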

Game designers learned long ago that if their product only appeals to people with high-end gaming systems, they’ll exclude most of their market. So to combat the issue of lag and outrageous system requirements, they create most objects in their game as “low poly” items. Yes, you sacrifice detail. But when you aim and shoot at that low-detail deer, he actually falls down.

Xbox and similar systems are made specifically for gaming. Therefore polycount is much less of an issue, and those fantastic, lifelike graphics are possible. For those of us who prefer computer interfaces, we must sacrifice some of the stunning visuals. Unless, of course, we’re fortunate enough to own a very expensive computer with a high-end graphics card and processor. Dear Santa. . . .

Sims 2 machinima directors are notorious for using custom content. Dissatisfied with the cartoonish appearance of the base game, they’ve crammed their “downloads” folder with items created by the community itself, not Maxis. Most of this custom content has a much higher polycount and was never intended for regular gameplay. It was for filmmaking only. As a director, I learned quickly that filming in real time rendered choppy, laggy animation that no video editor could remedy. A trick of the trade? Film in slow motion. Veeeerrrrryyyy slloooooooow motion, as in minus-10X the normal speed.
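The arithmetic behind the slow-motion trick can be sketched like this. It assumes that filming at "10X" slow means the game runs at one-tenth speed, and that the computer draws the same number of frames per real second either way. Both are simplifications, and the function names are just for illustration.

```python
def effective_fps(realtime_fps, slowdown):
    """Frames captured per second of in-game action when the game
    runs `slowdown` times slower than normal."""
    return realtime_fps * slowdown


def capture_seconds(scene_seconds, slowdown):
    """Real time needed to film a scene that lasts `scene_seconds` in-game."""
    return scene_seconds * slowdown
```

So a machine that only manages 3 frames per second at normal speed would capture 30 frames for every second of action at one-tenth speed. Speed the footage back up in a video editor and it plays smoothly. The price is time: a 30-second scene takes 300 seconds to film.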

So if I knew all these workarounds for lag, why couldn’t I just mesh a whole Sim and make it do whatever I want?

To some degree, this has been done. New feet, new bodybuilder types, and fat meshes have all been created by the community. Yet they still use the same skeleton, which has a set number of bones. The game simply will not recognize a different bone hierarchy. So we might retexture faces and heads, but there’s no point designing a new mesh because no matter how many bones we add in Milkshape, the game will only animate the ones Maxis created. This is why I could do absolutely NOTHING about goofy Sim expressions, floating teeth, or half the stuff non-gamers complained about.
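A minimal sketch of why extra bones are wasted effort. The bone names and the `animate` function are hypothetical and have nothing to do with the actual Sims 2 file format. The point is only that an engine with a fixed skeleton moves the vertices bound to bones it knows and leaves the rest where they are.

```python
# Hypothetical fixed skeleton that the game engine knows about.
GAME_SKELETON = {"head", "jaw", "neck"}


def animate(vertex_bones, bone_offsets, skeleton=GAME_SKELETON):
    """Return how far each vertex moves.

    vertex_bones: maps a vertex name to the bone it is assigned to.
    bone_offsets: maps a bone name to the movement the animator asked for.
    Vertices bound to bones outside `skeleton` do not move at all.
    """
    moved = {}
    for vertex, bone in vertex_bones.items():
        if bone in skeleton:
            moved[vertex] = bone_offsets.get(bone, 0.0)
        else:
            moved[vertex] = 0.0  # the engine ignores bones it does not know
    return moved


# "smile_left" is a bone added by the mesher, not part of the fixed skeleton.
bindings = {"chin": "jaw", "mouth_corner": "smile_left"}
requested = {"jaw": 1.0, "smile_left": 2.0}
```

Calling `animate(bindings, requested)` moves the chin but leaves the mouth corner frozen. Pass a skeleton that includes `"smile_left"` and the mouth corner moves too, which is roughly the freedom a platform with an open bone setup gives you.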

iClone is different. There is no “game” anywhere in it. The puppets have zero autonomy. There are no behavior algorithms constantly running in the background. But the visual detail is second to none. Look again at those screenshots of the head meshes. iClone has assigned bones to each and every one of those little dots and given us full control over how they’re used.

Doesn’t this create lag? Damn right it does. My poor laptop can’t even process a full 3D scene plus an avatar. It simply freezes. And yes, I can see this becoming a problem in the future. But iClone saw this coming and built “workarounds” directly into the software. During set creation and script-building, we have the option of turning off pixel shaders (a huge resource hog) and even viewing the set as a wireframe. This eliminates lag altogether during this phase. Then, when it’s time to film the scene, iClone provides a handy-dandy “by frame” option that renders each frame completely before moving to the next. This takes forever, but once it’s done, playback is flawless.
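The difference between real-time playback and frame-by-frame rendering can be sketched as a toy simulation. This is not how iClone is implemented internally. It only illustrates the trade-off, and the function names and the 100-millisecond frame interval are assumptions chosen to keep the numbers round.

```python
def realtime_frames(render_ms, scene_frames, frame_ms=100):
    """Frames that actually reach the screen when the scene clock keeps
    running while each frame is being drawn."""
    shown = []
    clock = 0  # milliseconds of scene time elapsed
    while clock // frame_ms < scene_frames:
        shown.append(clock // frame_ms)
        # Drawing takes at least one frame interval; a slow draw eats several.
        clock += max(render_ms, frame_ms)
    return shown


def by_frame_frames(render_ms, scene_frames):
    """Every frame is drawn in full before moving on.

    Returns the list of frames and the total render time in milliseconds."""
    return list(range(scene_frames)), render_ms * scene_frames
```

If each frame takes 300 ms to draw, real-time playback of a 10-frame scene only shows frames 0, 3, 6, and 9, which is the choppiness. Rendering by frame keeps all ten frames but takes 3,000 ms to do it: slow to produce, flawless to play back.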

Now. . .I have other characters to create and audition for Amanda. Tom is next. He’s how I will spend my afternoon. 🙂

11 responses to “Meet Finn”

This was fascinating. I would love to play around with iClone, but that will have to be another project for another time. Will you be purchasing the software?

Hi, Rebecca! Good to see you here. 🙂

The software. . .oooh, yes. The software. Let me say I definitely intend to own a legit copy within the next few months.

Despite all the IWW ruckus over Carol’s Stonehaven video, I think most people missed the fact that the machinima was made as a contest entry. This contest ran from the end of August until its November 12th deadline. Now all the people who entered it (there were 32, I think) are holding their collective breath waiting for winners to be announced.

The prize packages for first, second, and third place include the full version of iClone along with assorted add-on packs and software like Sony Vegas Pro 10. So I don’t want to buy anything until winners are announced. Do I think I have a shot at placing in the top three? Yes, I actually do. There are some VERY strong entries this year, so I don’t by any means think I have this one in the bag. But I do think “Stonehaven” will hold its own among them, and contest results will depend heavily on the judges’ personal tastes.

In the event Stonehaven doesn’t place in the top three, I have mailed my wish list to Santa. 😉

This was amazing! You did a great job creating him without falling into the Uncanny Valley. I can’t wait to see what comes of this. You’re crazy talented.

LOL! I don’t know so much about the “talented,” but you sure got the “crazy” part right!

Thank you for that, and thank you for commenting. Your voice is always needed and much welcomed here. 🙂

You need a new laptop! And your own iClone software, and some employees to do some of your grunt work, and and and…. hey, when your son comes to live with you this summer, maybe HE could do some of the work? Or how about your daughter? 🙂 I mean the laborious things about the creation of these characters. But if they just tackle the laundry, dishes, etc, and grocery shopping and cooking, you can keep going solo. Oh: above all, WOW, oh wow, you’re doing great things!! Finn is a hunk. 🙂 You caught just the twinkle of mischief a wolf-boy should have. LOVE IT

Ohhh, yeah. I agree completely that Finn is a hunk. Why, oh why must he only be a 3D puppet?

That twinkle of mischief? No idea where that came from. You see the image I started with. Not too twinkly, if you ask me–although I think Ben McKenzie is quite hunkish himself. 😉 These puppets may never get the chance to develop personality quirks and the apparent strong will that Sims can demonstrate, but Finn’s character certainly has shown me that doesn’t mean they can’t (or won’t) be unique.

Go Finn!

I could just stare at his pretty face all day. . . .

He looks sooooo gooooooood!!!!!!!!!!!!!

You’ve done an amazing job at making him come alive, right off the page! Thank you! 🙂

Thank you for letting us ‘ride along’. It’s a little like watching a baby walk and seeing him grow into an Olympic sprinter at warp speed.

Sharon

I’m astounded at your skill and I’ve fallen for Finn. The research you did to find the real man is awesome.

Great job you’ve done there and I appreciated the mini-tutorial:) So this is Finn…I had read the online sample of Amanda’s book, “Syzygy”. (I also looked up the word and listened to an online pronunciation) Now I’m all the more interested. The story’s very interesting so far and I’m looking forward to the machinima.

Now please allow me a little indulgence – “Russell Crowe! Oh my gosh! My gladiator!”

Okay, back to Finn: I just love his smile. His face has ‘character’. This really makes the sims’ expressions look goofy BUT….

I really want to see what comes out when your talent goes into iClone:)

Hi, Odara! I’m re-reading Syzygy now, freshening up my memory for the trailer. It’s just as good, maybe even better, the second time through because I catch all the nuances. 😉

You hit upon the one thing that makes iClone such a draw for me over any other platform: control over facial expressions. I’ve spent more time on puppet faces, learning how the muscle groups work, than I have on any other aspect of the software. Now my trial period has expired, so I guess I’ll have to wait ’til Christmas to keep going. But because my daughter is a full-time college student, I was able to procure a legit product key for the educational version of 3DS Max, which is a professional modeling tool like Milkshape, but on a completely different level. 3DS Max has plug-ins that work directly with iClone, like the Unimesh between Sims 2 and Milkshape. So I’ll be devoting the next few weeks to learning Max, so I can pick up where I left off meshing cool new stuff for my projects.

In some ways, though, I feel like a traitor. Dumping Sims 2? Isn’t that what so many other directors did and left the community in shambles? In my heart, I know I’m not dumping anything. I’m a Sims 2 machinima director who also works with another platform. It would be wonderful if any success I have using iClone as a commercial tool can be used to further the cause of Sims 2 machinima.